Understanding Non-Printable Characters in ASCII

What are Non-Printable Characters?

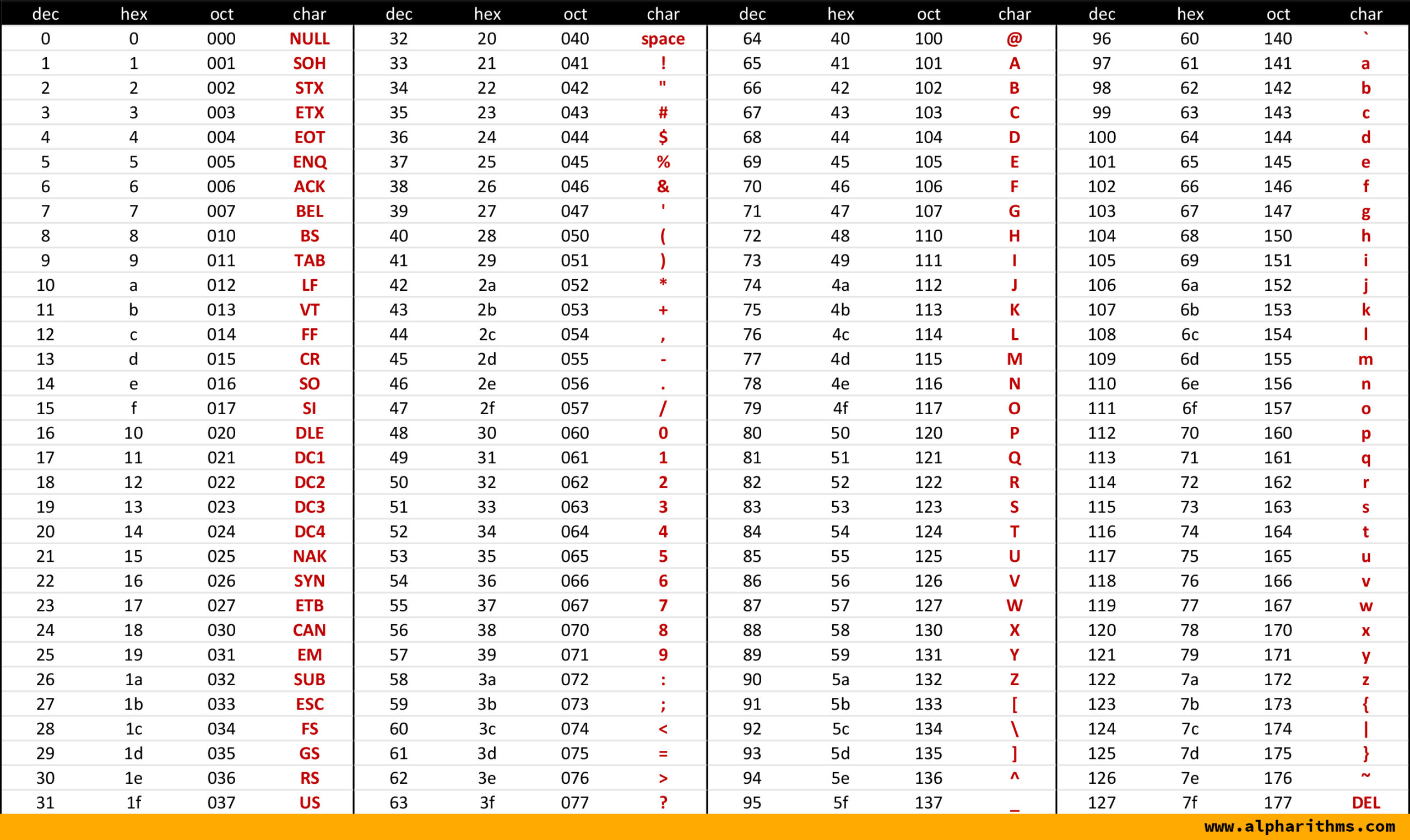

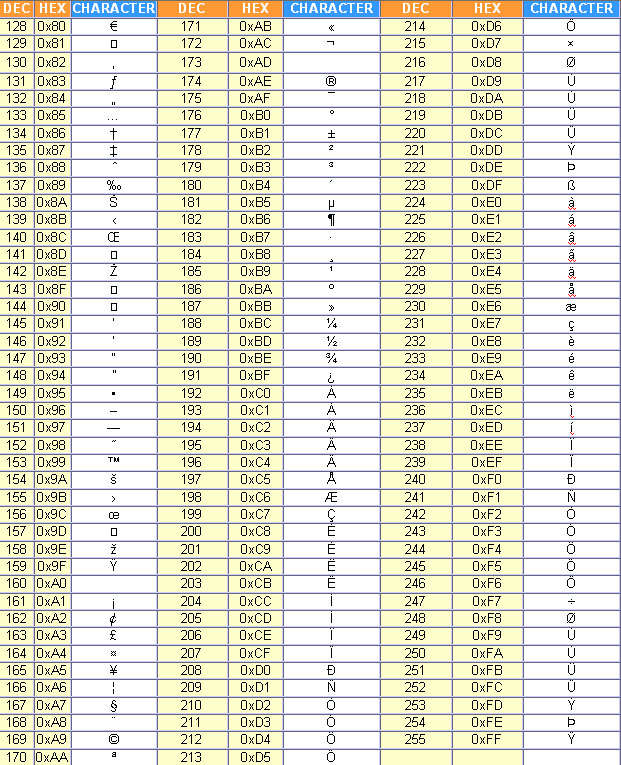

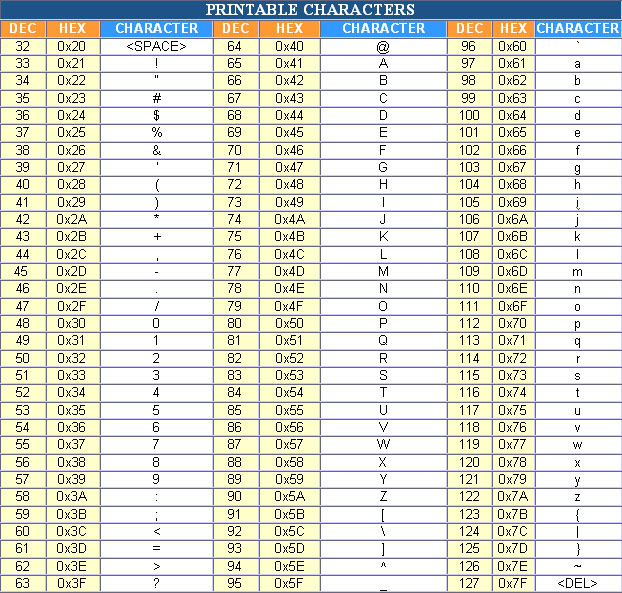

In computing and programming, ASCII (American Standard Code for Information Interchange) is a character encoding standard that assigns a unique 7-bit code, from 0 to 127, to each of its 128 characters, which include letters, digits, punctuation, and symbols. However, not all ASCII characters are printable: some have no visual representation on the screen. These non-printable characters, also known as control characters, were designed to control devices and the handling of text and data rather than to be displayed.
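
To make the 0 to 127 range concrete, here is a minimal Python sketch (Python 3.9 or later; the language is our choice, since the article does not prescribe one) that sorts every ASCII code into printable and non-printable groups. It relies on the built-in str.isprintable(), which for ASCII input treats codes 0 to 31 and 127 as non-printable and the space (32) as printable. The function name classify_ascii is hypothetical.

```python
# Sort the 128 ASCII code points into printable and non-printable groups.
# str.isprintable() returns False for the ASCII control characters
# (codes 0-31 and 127) and True for the space (32) and visible characters.

def classify_ascii() -> tuple[list[int], list[int]]:
    """Return (printable, non_printable) lists of ASCII code points."""
    printable: list[int] = []
    non_printable: list[int] = []
    for code in range(128):  # ASCII covers codes 0 through 127
        if chr(code).isprintable():
            printable.append(code)
        else:
            non_printable.append(code)
    return printable, non_printable


printable, non_printable = classify_ascii()
print(len(printable), "printable,", len(non_printable), "non-printable")
print("non-printable codes:", non_printable)
```

Running it should report 95 printable and 33 non-printable codes.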

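Non-printable characters serve various purposes, such as formatting text, moving the cursor, and marking the end of a transmission or, on some older systems, the end of a text file. Programming languages also expose them through escape sequences for everyday tasks, such as starting a new line (line feed, \n, code 10) or inserting a tab (horizontal tab, \t, code 9). In ASCII, the control characters occupy codes 0 through 31, plus 127 (DEL), for 33 characters in total; they are usually referred to by these code values or by short abbreviations such as NUL, LF, and DEL.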
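
Because control characters have no glyph, tools often display them in caret notation, where each one is shown as ^ followed by a letter or symbol (^J for line feed, ^? for DEL). The sketch below assumes that convention; is_ascii_control and caret_notation are illustrative helper names, not a library API.

```python
# Make ASCII control characters visible using caret notation.

def is_ascii_control(code: int) -> bool:
    """True for the ASCII control codes 0-31 and 127 (DEL)."""
    return 0 <= code <= 31 or code == 127


def caret_notation(text: str) -> str:
    """Replace each ASCII control character in text with its caret form."""
    out = []
    for ch in text:
        code = ord(ch)
        if is_ascii_control(code):
            # XOR with 0x40 flips bit 6: codes 0-31 map to '@' through '_',
            # and 127 maps to '?'.
            out.append("^" + chr(code ^ 0x40))
        else:
            out.append(ch)
    return "".join(out)


print(ord("\n"), ord("\t"))  # escape sequences are just codes 10 and 9
print(caret_notation("a\tb\r\n\x00\x7f"))
```

For the sample string, the tab, carriage return, line feed, NUL, and DEL come out as ^I, ^M, ^J, ^@, and ^?.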

Each control character was assigned a specific function. The set includes null (NUL, 0), start of heading (SOH, 1), start of text (STX, 2), and end of text (ETX, 3), along with many others, such as backspace (BS, 8), horizontal tab (HT, 9), line feed (LF, 10), carriage return (CR, 13), and escape (ESC, 27). How a given character is interpreted depends on the context in which it appears, such as a programming language, a terminal, a data transmission protocol, or a file format.
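
The standard abbreviations make it easier to inspect strings that contain control characters. This sketch hard-codes the 32 names for codes 0 to 31 plus DEL; CONTROL_NAMES, control_name, and describe are hypothetical names chosen for illustration.

```python
from typing import Optional

# Standard ASCII abbreviations for control codes 0-31, in code order.
CONTROL_NAMES = [
    "NUL", "SOH", "STX", "ETX", "EOT", "ENQ", "ACK", "BEL",
    "BS",  "HT",  "LF",  "VT",  "FF",  "CR",  "SO",  "SI",
    "DLE", "DC1", "DC2", "DC3", "DC4", "NAK", "SYN", "ETB",
    "CAN", "EM",  "SUB", "ESC", "FS",  "GS",  "RS",  "US",
]


def control_name(code: int) -> Optional[str]:
    """Return the abbreviation for an ASCII control code, or None."""
    if 0 <= code < len(CONTROL_NAMES):
        return CONTROL_NAMES[code]
    if code == 127:
        return "DEL"
    return None


def describe(text: str) -> list[str]:
    """List each character, replacing control characters with <NAME>."""
    result = []
    for ch in text:
        name = control_name(ord(ch))
        result.append(f"<{name}>" if name is not None else ch)
    return result


print(describe("\x02OK\x03\r\n"))
# ['<STX>', 'O', 'K', '<ETX>', '<CR>', '<LF>']
```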

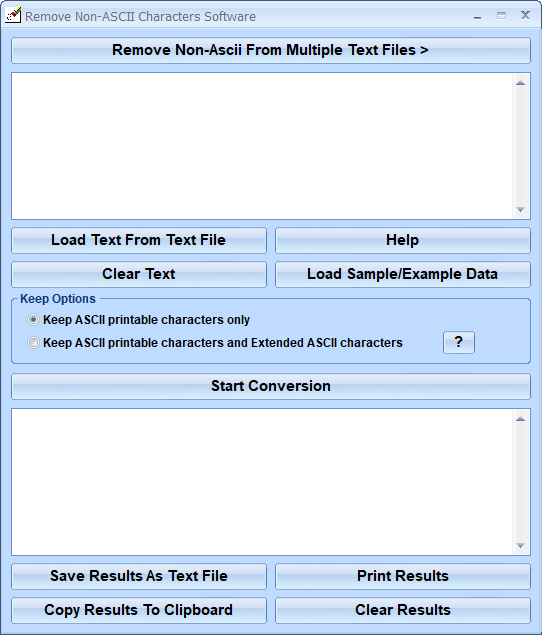

Uses of Non-Printable Characters

The uses of non-printable characters are diverse. In programming languages such as C, Java, and Python, escape sequences like \n (line feed) and \t (horizontal tab) place these characters in strings to start new lines or align tabular data. In data transmission, protocols have used STX and ETX to frame a message, and ACK (6) and NAK (21) to acknowledge or reject received data. In files, CR and LF mark line endings (Windows conventionally uses the CR LF pair, while Unix-like systems use LF alone); the information separators FS, GS, RS, and US (28 to 31) were designed to divide data into files, groups, records, and units; and some older systems, such as CP/M and MS-DOS, used SUB (26, Ctrl-Z) to mark the end of a text file. Because these characters determine how data is interpreted, understanding them is essential for anyone who parses files, debugs communication protocols, or processes text.
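
Two of the file-formatting uses above can be sketched in a few lines of Python. The first pair of functions uses US and RS as field and record delimiters, so commas in the data need no escaping; the third normalizes Windows (CR LF) and lone CR (classic Mac OS) line endings to LF. All function names are hypothetical, and the sketch assumes no field itself contains US or RS.

```python
# File-formatting uses of control characters:
# 1) US (31) and RS (30) as field and record delimiters;
# 2) normalizing CR LF and lone CR line endings to LF.

US = "\x1f"  # unit separator: between fields
RS = "\x1e"  # record separator: between records


def encode_records(records: list[list[str]]) -> str:
    """Join fields with US and records with RS.

    Assumes no field contains US or RS.
    """
    return RS.join(US.join(fields) for fields in records)


def decode_records(data: str) -> list[list[str]]:
    """Inverse of encode_records; an empty string decodes to no records."""
    if not data:
        return []
    return [record.split(US) for record in data.split(RS)]


def normalize_newlines(text: str) -> str:
    """Convert CR LF and lone CR line endings to LF."""
    return text.replace("\r\n", "\n").replace("\r", "\n")


rows = [["id", "name"], ["1", "Lovelace, Ada"], ["2", "Turing, Alan"]]
packed = encode_records(rows)
print(decode_records(packed) == rows)
print(normalize_newlines("line1\r\nline2\rline3\n").split("\n"))
```

Replacing "\r\n" before "\r" matters: doing it the other way round would turn each CR LF pair into two line breaks.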